post by Victor Ngo (2022 cohort)

My placement ran from July to September 2023, with Makers of Imaginary Worlds (MOIW), and involved planning and running a two-part study that would help inform my PhD research on ‘Artificial Intelligence and Robotics in Live Creative Installations’. More specifically, the study explored how children interact and form relationships with the robotic installation, how they understand and shape their interaction in this context, what meaning they attribute to the robot and their interaction, how curious they are during the interaction, and what motivates their curiosity.

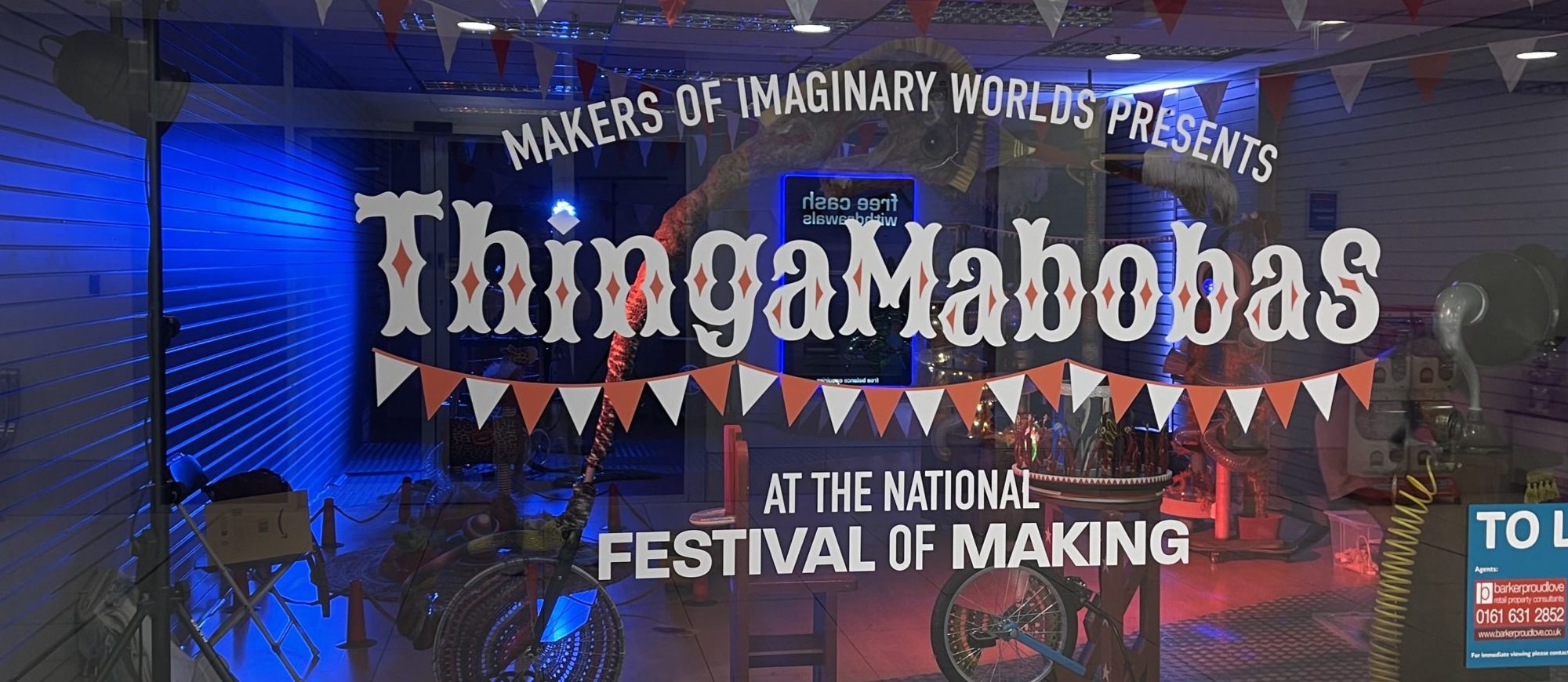

To give some background on my placement partner, MOIW are a Nottingham-based art company who aim to create interactive and sensory experiences where children can play, engage and explore. MOIW’s first live robotics project, Thingamabobas, is aimed at younger audiences and involves a computer vision-equipped robot arm, called NED (The Never-Ending Dancer), which can detect audience members and interact with them autonomously. The installation also includes a series of mechanical circus-like creations that children can interact with as imaginative, dynamic sculptures that provide novel, enjoyable, and empowering experiences.

Unlike social robots, industrial robot arms typically have a functional design, devoid of humanoid features or facial expressions. MOIW aims to transform the industrial robot arm into a playful kinetic sculpture that defies expectations, offering an inventive interpretation. By introducing variables such as costume design, musical accompaniment, and contextual storytelling, the artists aim to redefine the perception of the robotic arm. In doing so, they demonstrate how fiction can facilitate a willing suspension of disbelief among audiences, allowing viewers to trust and immerse themselves in reimagining a new reality.

The Study:

The first half of the study, part 1, was completed at the National Festival of Making, Blackburn, which drew a tremendous number of people and was, in general, a great event! This half of the study explored the audience’s understanding of the initial robotic system, with no changes to the system’s capabilities or functionality. For a researcher new to the system, this provided a baseline understanding of its performance, capabilities, and limitations in the wild; it proved extremely useful and gave me the knowledge necessary to complete part 2. The second half of the study, part 2, was completed at the Mansfield Museum, Mansfield. It explored the same audience understanding; however, the system was altered to allow 360-degree motion around the robot’s base and to use a different method of detecting the audience, moving from facial recognition to body pose detection. Although a direct comparison between the two parts is not possible, they still offer insights into audience responsiveness, engagement, and enjoyment, which are valuable to the discussion and to the future development of this or similar systems.


Reflections:

Over the course of three months, this placement has provided me with the opportunity to develop key interpersonal and professional skills, as well as improve my technical aptitude.

My initial discussions with MOIW about the placement were immediately met with enthusiasm, and sometimes whimsical imagination, from Roma Patel and Rachel Ramchurn, the artists of MOIW. Although MOIW is a relatively small company, I was fortunate enough to learn the ins and outs of art installation production: how artists like Roma and Rachel turn ideas and imagination into professional productions, and how they deal with issues, changes and unexpected setbacks. Shadowing them allowed me to observe how they manage large-scale projects and interact with professional organisations. This has enabled me to further develop my own understanding of professional engagement and project management.

Throughout all stages of the placement, the artists from MOIW frequently discussed possible alterations and upgrades with me to improve the interaction capabilities of the robot. Here I was able to apply and improve my technical experience, developing solutions to enhance the robot’s interaction capabilities or increase the accuracy and reliability of the system’s audience detection. Despite the technical success of some of these solutions, it is important to highlight that not all of the artists’ ideas were technically feasible or within the scope of the project. Communicating this effectively and managing the artists’ expectations was key to ensuring both that the robot was functional and ready for the study, and that the professional relationship with MOIW was maintained, without either party being let down or led to believe the system was any greater or less than what was agreed on.

During the study, I was not only the lead researcher on site but also took on a range of other roles that sometimes required me to step out of my comfort zone. These included, in no particular order of social or imaginative intensity: Thingamabobas Wrangler, Storyteller, Imagination Guide, The Researcher from Nottingham University, and Technical Support.

As part 1 of the study at the National Festival of Making was a two-day weekend event at the height of the UK summer holiday, I was left with little choice but to quickly adapt to these new roles or suffer being swarmed by the thousands of curious and enthusiastic visitors who attended the event. To my surprise, and with a little help from Roma and Rachel, I was able to help children and adults alike be transported into the whimsical world of the Thingamabobas, for about 20 minutes at a time.

Overall, I thoroughly enjoyed the experience, and the opportunity to work with MOIW not only to develop an art installation but also to help run it was a great privilege. The skills I have learned and applied, both in professional engagements and in the wild, will be beneficial to my PhD research and to me as an individual.